Claude Opus seems to have become less intelligent recently.

More users are expressing a vague feeling that while the model doesn’t make obvious mistakes, it no longer feels as “smart” as before.

Responses are faster, reasoning is shorter, and it sometimes appears to skip essential steps, becoming more perfunctory.

If this were just an isolated case, users might suspect it’s their issue, but as similar feedback increases, it becomes more than just a feeling.

Videos have even surfaced online, joking that the current Opus resembles a fierce lion that has been declawed, revealing it to be just a dog.

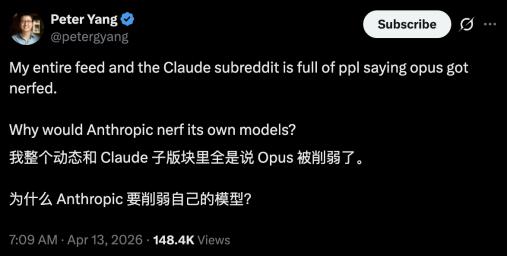

A more direct phrase has started circulating: Opus has been nerfed!

Is this true? If so, why would it be nerfed?

Decline in Reasoning Depth by 67%

Initially, only a few users complained that Claude Opus had “become lazy” or “wasn’t as smart as before.”

They might have noticed occasional low-level mistakes or fewer reasoning steps in complex tasks.

In a sense, collaborating with the model is similar to interacting with a person; when a previously cooperative “colleague” suddenly changes, it’s unsettling.

Most people’s first reaction is to doubt themselves: Is the prompt not well-written? Is the task inherently unsuitable? Surely, this is just a coincidence?

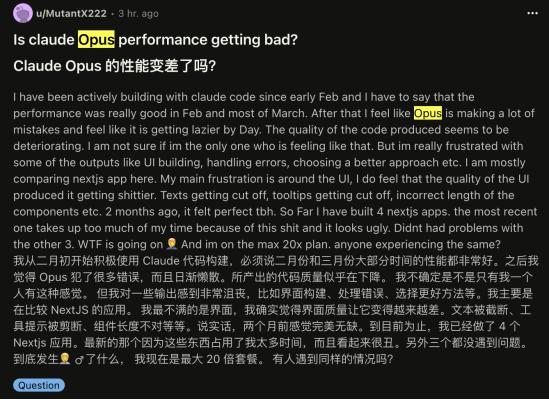

However, soon similar feedback began to appear densely in the Claude community on Reddit, with consistent descriptions:

Some users noted it no longer reads code carefully; others observed it provides answers faster but often omits crucial steps; and some found it more prone to “prematurely ending” long tasks, as if assuming the job was done.

When different users across various scenarios start repeating the same type of issues, it seems less like a mere “feeling off” and more like a change in behavior patterns.

In other words, it’s not that the feeling is wrong; the model is genuinely changing.

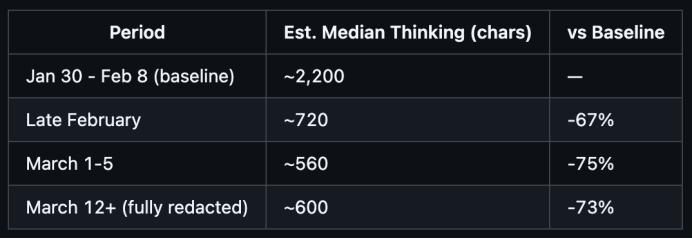

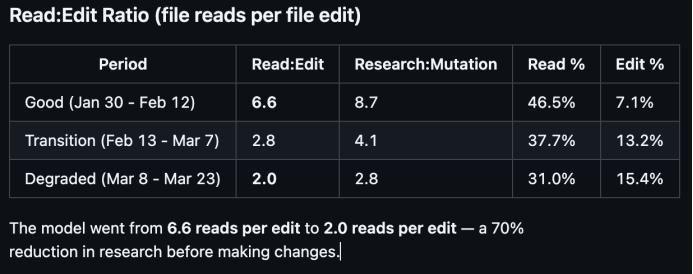

What escalated the discussion was this number: some users compared historical interaction logs while using Claude Code and found that the reasoning process in complex tasks had noticeably shortened, with reasoning depth declining by 67% since the February update.

(Reference link: https://github.com/anthropics/claude-code/issues/42796)

The author candidly explained that the 67% figure is based on an estimated correlation between signature length and the length of thought content, not a direct measurement. They also mentioned that logs from January were deleted, making baseline comparisons less accurate.

In contrast, what’s more compelling in the report are the behavioral changes. For instance, the ratio of read:edit (reading code vs. modifying code) dropped from 6.6 to 2.0; after March 8, 173 violations were captured by the stop hook, whereas previously there were none.

However, the precision of the numbers isn’t as crucial as the fact that they quantify an otherwise vague experiential issue into a trend that can be discussed.

Thus, a new term began to circulate in the community: “AI shrinkflation.”

Shrinkflation is an economic term referring to the reduction in size or quantity of a product while the price remains the same. Here, it directly means that the actual capabilities provided to users have diminished, even though the model still bears the same name.

The Problem Behind the Perfunctoriness

In contrast to the community’s intense reactions, Anthropic has not directly acknowledged that the “model has weakened.”

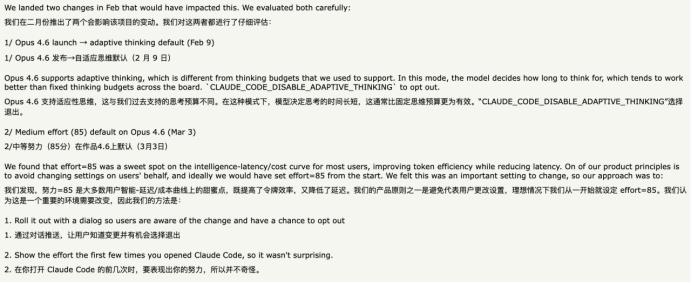

Boris, the head of Claude Code development, explained that these changes stem from adjustments at the system level, including changes in tool invocation methods, reasoning strategies, and resource allocation mechanisms, rather than a decline in the model’s inherent capabilities.

He provided an example: in Claude Code, some issues are believed to originate from the toolchain and system prompts, not the model itself. Meanwhile, under high load, the system needs to control computing power, tokens, and requests, which can also affect user experience.

In the latest version, Anthropic introduced a mechanism called “adaptive thinking,” where the model dynamically decides how much reasoning to use based on task complexity.

In other words, the model hasn’t deteriorated; it has begun to “decide for itself” how much computing power to employ.

(Reference link: https://news.ycombinator.com/item?id=47660925)

From an engineering perspective, this is a reasonable optimization: less thinking for simple tasks, more for complex ones, to enhance overall efficiency.

However, the problem is that efficiency optimization and capability reduction feel indistinguishable from the user experience.

When a model starts reading context less, providing faster answers, and prematurely ending tasks more frequently, users perceive this not as optimization but as perfunctoriness.

Moreover, this adaptive reasoning mechanism can indeed create discomfort from a subjective standpoint.

Using the interpersonal analogy again: why, after starting off well, does it feel like my concerns are no longer important later on?

This discomfort was quickly amplified by another change: before its release, Mythos attracted significant attention, with Claude Mythos Preview being directly labeled by Anthropic as the “next generation of capability leap,” demonstrating far superior abilities in coding and security tasks. Hence, it is being restrictively provided to a few institutions to reinforce “the world’s most critical software systems.”

When a “stronger new model” appears alongside an “old model that feels diminished,” a speculation often mentioned in the community begins to take shape: nerfing the old model to elevate the new one creates the impression of a significant upgrade.

This logic lacks direct evidence, but it is increasingly believed by users.

Models No Longer Stable

In reality, similar occurrences are not unfamiliar in AI.

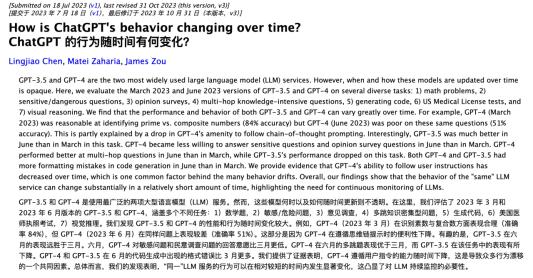

As early as 2023, research compared GPT-4’s performance at different times, finding that the same model exhibited noticeable changes in reasoning methods and output behaviors over a few months. These changes were later explained as a result of multiple factors: including adjustments in reasoning strategies, tightening of safety protocols, and optimizations for cost and response speed.

Setting conspiracy theories aside, if there is indeed a degree of resource bias, it is quite normal in the AI industry: whether OpenAI or Google, almost all companies prioritize optimizing the latest generation of models, while older models gradually become marginalized.

Computing power is both a cost and a productivity factor. When the upper limit of a new model’s capabilities is higher and its potential value greater, investing more resources into it is a rational choice.

In this process, the state of the old model will naturally change: being “downgraded,” reasoning depth compressed, and resource allocation readjusted… all of these can be understood as a kind of engineering trade-off.

However, understanding doesn’t equate to acceptance; the old model being altered without warning while the new model remains unavailable to the public is hard for anyone to accept.

From the user’s perspective, the most frustrating aspect isn’t the model’s “diminished intelligence” but its “instability.”

When a model transitions from a stable tool to a constantly changing system that makes its own “better adjustments” without prompts, version notes, or boundaries, it becomes problematic.

As a user, you don’t know when it changed, what exactly changed, or whether these changes will impact your ongoing tasks.

You can only feel that it has changed, and it’s not as useful as it once was.

At this point, a new model appears before you, seeming more stable and reliable, and perhaps easier to use.

Thus, the choice becomes nuanced: it seems you are no longer actively choosing the new model, but rather being pushed towards it by the changes in the old model.

Even if you know that the new model might someday become the next old model, potentially “optimizing” into an unpleasant version unexpectedly, the gap is already evident.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.